RL: DeepSeek R1 vs Kimi K1.5

Summary

In my view, these methods differ in form but are similar in spirit. The RL optimization parts of K1.5 and R1 can still be understood under a broader policy-gradient-style framework.

GRPO fits this framework because it is a variant of PPO, and PPO itself belongs to the policy gradient family. One important difference between GRPO and PPO lies in how the advantage-like training signal is constructed: GRPO replaces the usual critic-based value estimate with a relative comparison among multiple outputs sampled for the same prompt.

As for K1.5, if we break down its optimization procedure, it still contains elements such as a surrogate-style objective, an advantage-like weighting term, and gradient-based parameter updates. These are all central ingredients in policy-gradient-style optimization. Although the K1.5 authors motivate the method from policy mirror descent, it is still reasonable, from an optimization perspective, to understand it together with policy-gradient methods within a broader common framework.

Multiple Samples for the Same Question

At the same time, both K1.5 and GRPO involve sampling multiple outputs for the same prompt. These samples are then used to construct quantities such as relative advantages or baselines, which avoids the need to train and maintain a separate critic model, that is, a value function.

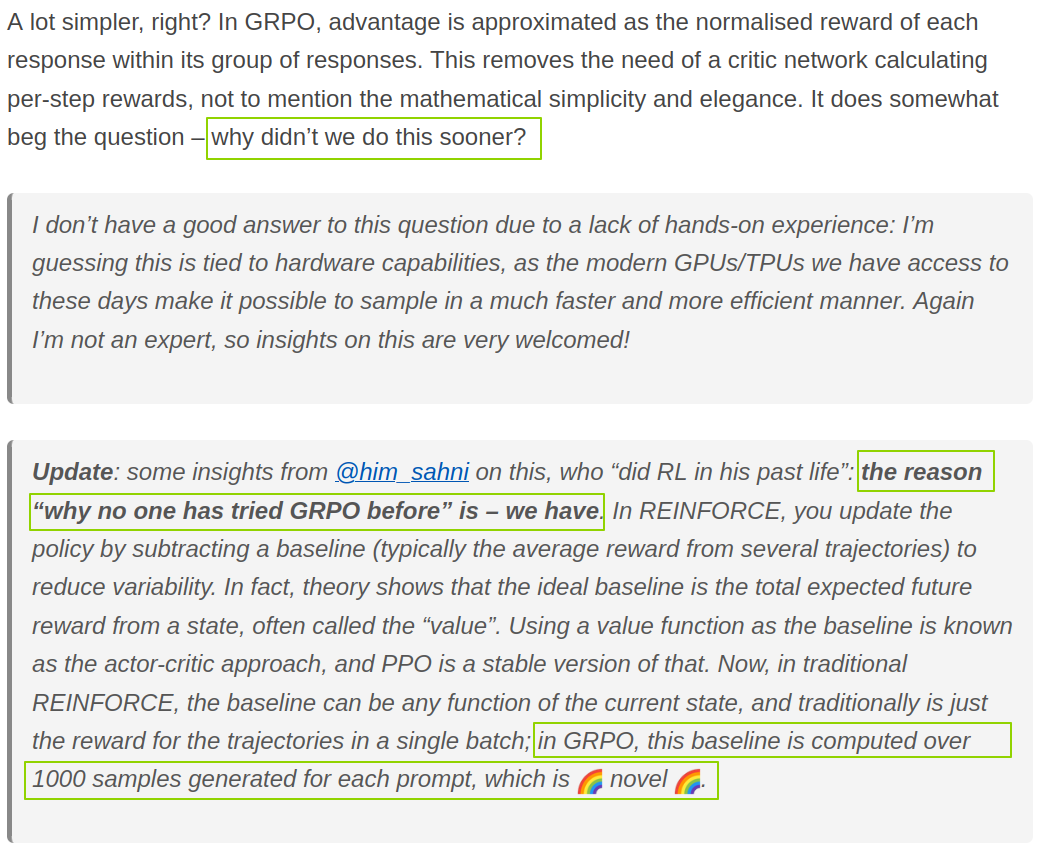

This is one reason GRPO is often viewed as an innovation: instead of relying on a learned value baseline, it derives a baseline-like signal by comparing multiple sampled outputs for the same question.
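
As a minimal sketch of the idea above, the following Python computes an advantage-like signal from the rewards of several outputs sampled for one prompt. The function name `group_advantages`, the `eps` guard against zero variance, and the use of the population standard deviation are assumptions made for illustration, not details taken from either paper.

```python
from statistics import mean, pstdev


def group_advantages(rewards, normalize=True, eps=1e-8):
    """Advantage-like signal for a group of outputs sampled for one prompt.

    The group mean plays the role of the baseline, so no critic is needed.
    `eps` and the population std are illustrative assumptions.
    """
    baseline = mean(rewards)
    centered = [r - baseline for r in rewards]
    if not normalize:
        # Mean-centered form: r - mean(r)
        return centered
    # Standardized form: (r - mean(r)) / std(r)
    scale = pstdev(rewards) + eps
    return [c / scale for c in centered]


# Four sampled answers to the same question, scored 1 (correct) or 0 (wrong).
rewards = [1.0, 0.0, 0.0, 1.0]
print(group_advantages(rewards))
print(group_advantages(rewards, normalize=False))
```

With `normalize=True` this mirrors the standardized form listed for GRPO in the tables below; with `normalize=False` it gives the mean-centered \(r-\bar r\) form listed for K1.5. When every sample in a group receives the same reward, both forms return zeros, so that group contributes no learning signal.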

From the Perspective of Its Components

| Component | Policy Gradient | PPO | GRPO | Kimi K1.5 |
|---|---|---|---|---|
| policy model \(\pi_\theta\) | Required. This is the model being optimized. | Required. This is the model being optimized. | Required. This is the model being optimized. | Required. This is the model being optimized. |
| reference policy model \(\pi_{\theta_k}\) | Not required. | Required. | Required. | Required. |
| reward model | Required. | Required. | Required. | Required. |
| value function \(V_{\phi_k}\) (critic model) | Required. It estimates the expected future return, and methods such as GAE use it to compute the advantage. It must be continuously updated and kept accurate. | Required. It estimates the expected future return, and methods such as GAE use it to compute the advantage. It must be continuously updated and kept accurate. | Not required. The advantage is computed from the rewards of the sampled group instead. | Not required. Since the gradient can be written explicitly, the baseline-like term can be approximated by the sample mean. |
| rewards-to-go \(\hat{R}_t\) | Required, for updating the value function. | Required, for updating the value function. Exactly how the reward is distributed back to each token depends on the implementation. | Not needed, since there is no critic. | Not needed, since there is no critic. |

From the Perspective of the Key Computations

| Policy Gradient | |
|---|---|
| objective function | \(J(\pi_\theta)=\mathbb{E}_{\tau\sim\pi_\theta}[R(\tau)]\) |
| surrogate objective function | None |
| gradient | \(\nabla_\theta J(\pi_\theta)=\mathbb{E}_{\tau\sim\pi_\theta}\left[\sum_t\nabla_\theta \log \pi_\theta(a_t\mid s_t)\, A^{\pi_\theta}(s_t,a_t)\right]\) |
| advantage | \(A^{\pi_\theta}(s,a)=Q^{\pi_\theta}(s,a)-V^{\pi_\theta}(s)\) |
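
To make the gradient row concrete, here is a small sketch for a one-step softmax policy over a handful of discrete actions. The helper names `softmax` and `policy_gradient_estimate` are hypothetical, and the sample-mean reward is used as a simple stand-in for the advantage \(Q-V\); that substitution is an assumption for illustration, not the full estimator.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_estimate(logits, actions, rewards):
    """Monte Carlo estimate of the gradient of expected reward w.r.t. the logits.

    Each sample contributes grad log pi(a) * (r - baseline), where the
    baseline is the mean reward of the batch (an illustrative assumption).
    """
    probs = softmax(logits)
    baseline = sum(rewards) / len(rewards)
    grad = [0.0] * len(logits)
    for a, r in zip(actions, rewards):
        advantage = r - baseline
        for i, p in enumerate(probs):
            # For a softmax policy: d log pi(a) / d logit_i = 1[i == a] - pi(i)
            indicator = 1.0 if i == a else 0.0
            grad[i] += (indicator - p) * advantage
    return [g / len(actions) for g in grad]


# Two actions with equal logits; action 0 was rewarded, action 1 was not.
print(policy_gradient_estimate([0.0, 0.0], actions=[0, 1], rewards=[1.0, 0.0]))
```

The estimate is positive for the rewarded action's logit and negative for the other, so a gradient-ascent step shifts probability toward the rewarded action.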

| PPO | |
|---|---|
| objective function | \(J(\pi_\theta)=\mathbb{E}_{\tau\sim\pi_\theta}[R(\tau)]\) |
| surrogate objective function | \[L(\theta)=\mathbb{E}_t\left[\min\left(\frac{\pi_\theta(a_t\mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t\mid s_t)}A_t,\ \operatorname{clip}\left(\frac{\pi_\theta(a_t\mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t\mid s_t)},1-\epsilon,1+\epsilon\right)A_t\right)\right]\] |
| gradient | No simple closed-form expression because of the min and clip operations; in practice it is obtained by differentiating the surrogate objective (for example, with automatic differentiation) |
| advantage | \(A^\pi(s,a)=Q^\pi(s,a)-V^\pi(s)\) (typically estimated with GAE) |
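
The clipped surrogate in the table can be sketched in a few lines of Python. The function names are hypothetical, the inputs are assumed to be log-probabilities under the new and old policies, and `eps=0.2` is only a commonly used default, not a value stated in this document.

```python
import math


def ppo_clip_term(logp_new, logp_old, advantage, eps=0.2):
    """Clipped surrogate term for a single (state, action) sample."""
    ratio = math.exp(logp_new - logp_old)  # pi_theta / pi_theta_old
    clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
    # Taking the min makes the objective a pessimistic bound on ratio * A.
    return min(ratio * advantage, clipped * advantage)


def ppo_surrogate(logps_new, logps_old, advantages, eps=0.2):
    """Sample average of the clipped terms (the quantity to maximize)."""
    terms = [
        ppo_clip_term(n, o, a, eps)
        for n, o, a in zip(logps_new, logps_old, advantages)
    ]
    return sum(terms) / len(terms)


# A sample whose probability ratio grew to 1.5 with a positive advantage:
# the clip caps its contribution at (1 + eps) * A.
print(ppo_clip_term(math.log(1.5), 0.0, advantage=1.0))
```

Note the asymmetry: when the advantage is negative and the ratio has grown, the unclipped term is the smaller one and is kept, so the penalty for increasing the probability of a bad action is not capped.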

| GRPO | |
|---|---|
| objective function | \(J(\pi_\theta)=\mathbb{E}_{\tau\sim\pi_\theta}[R(\tau)]\) |
| surrogate objective function | \[L(\theta)=\mathbb{E}_{q,\ \{o_i\}_{i=1}^{G}\sim\pi_{\theta_{\mathrm{old}}}}\left[\frac{1}{G}\sum_{i=1}^{G}\min\left(\frac{\pi_\theta(o_i\mid q)}{\pi_{\theta_{\mathrm{old}}}(o_i\mid q)}\hat A_i,\ \operatorname{clip}\left(\frac{\pi_\theta(o_i\mid q)}{\pi_{\theta_{\mathrm{old}}}(o_i\mid q)},1-\epsilon,1+\epsilon\right)\hat A_i\right)\right]\] (ignoring the KL term) |
| gradient | No simple closed-form expression because of the min and clip operations; in practice it is obtained by differentiating the surrogate objective (for example, with automatic differentiation) |
| advantage | \[\hat A_i=\frac{r_i-\bar r}{\operatorname{std}(\{r_1,\ldots,r_G\})},\qquad \bar r=\frac{1}{G}\sum_{j=1}^{G}r_j\] (multiple samples for the same question; no critic model; no GAE) |

| Kimi K1.5 | |
|---|---|
| objective function | \[\max_\theta\ \mathbb{E}_{(y,z)\sim\pi_\theta}[r(x,y,y^*)]-\tau\,\mathrm{KL}\big(\pi_\theta(x)\,\|\,\pi_{\theta_i}(x)\big)\] |
| surrogate objective function | \[L(\theta)=\mathbb{E}_{(x,y^*)\sim\mathcal D}\left[\mathbb{E}_{(y,z)\sim\pi_{\theta_i}}\left[\left(r(x,y,y^*)-\tau\log Z-\tau\log\frac{\pi_\theta(y,z\mid x)}{\pi_{\theta_i}(y,z\mid x)}\right)^2\right]\right]\] |
| gradient | \[\frac{1}{k}\sum_{j=1}^{k}\left[(r(x,y_j,y^*)-\bar r)\cdot\nabla_\theta\log\pi_\theta(y_j,z_j\mid x)-\frac{\tau}{2}\nabla_\theta\left(\log\frac{\pi_\theta(y_j,z_j\mid x)}{\pi_{\theta_i}(y_j,z_j\mid x)}\right)^2\right]\] |
| advantage | \(r-\bar r\) (multiple samples for the same question; no critic model; no GAE) |
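
The gradient row can be recovered from the surrogate row in a couple of steps. The sketch below abbreviates \(r=r(x,y,y^*)\), \(\pi_\theta=\pi_\theta(y,z\mid x)\), and \(\pi_{\theta_i}=\pi_{\theta_i}(y,z\mid x)\), and assumes that \(\tau\log Z\) does not depend on \(\theta\), so it can be treated as a constant when differentiating. Applying the chain rule to the squared term for one sample gives:

\[
\nabla_\theta\left(r-\tau\log Z-\tau\log\frac{\pi_\theta}{\pi_{\theta_i}}\right)^2
=-2\tau\left[(r-\tau\log Z)\,\nabla_\theta\log\pi_\theta-\frac{\tau}{2}\,\nabla_\theta\left(\log\frac{\pi_\theta}{\pi_{\theta_i}}\right)^2\right]
\]

Here the second term uses \(\nabla_\theta\big(\log\frac{\pi_\theta}{\pi_{\theta_i}}\big)^2=2\log\frac{\pi_\theta}{\pi_{\theta_i}}\,\nabla_\theta\log\pi_\theta\). Minimizing the surrogate means stepping against this gradient, which is the same as stepping along the bracketed expression up to the positive constant \(2\tau\). Replacing \(\tau\log Z\) with the sample mean \(\bar r\) of the \(k\) rewards and averaging over the \(k\) samples then yields the expression in the gradient row, with \(r-\bar r\) acting as the advantage-like weight on \(\nabla_\theta\log\pi_\theta\).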